So you can safely ignore the other blog posts on the internet which provide some custom workaround or connector for ADF and Snowflake. And please note that ADF will only connect to Snowflake accounts in Azure.Īs mentioned above, with this second release we can finally put all of those clunky workarounds behind us. All you need to do is create a Linked Service to Snowflake, create a new pipeline, and then begin using one or more of the three activities outlined above to interact with Snowflake. Getting started with ADF and Snowflake is now super easy. For an announcement of the new Script activity, including a nice comparison of the Lookup and Script activities, see the ADF team’s recent blog post. It’s with this new activity that customers are now able to create end-to-end pipelines with Snowflake. This gives users the flexibility to transform data they have loaded into Snowflake while pushing all the compute into Snowflake. And importantly, this activity can be used to execute data manipulation language (DML) statements and data definition language (DDL) statements, as well as execute stored procedures. This newly released Script activity in ADF provides the much anticipated capability to run a series of SQL commands against Snowflake. The third activity to consider in ADF is the new Script activity.

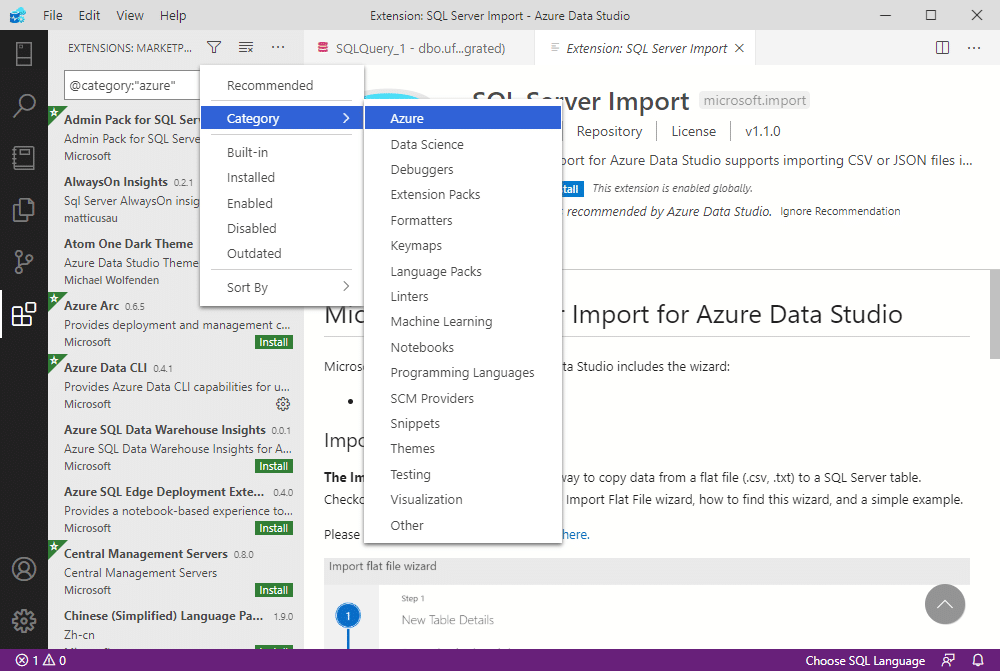

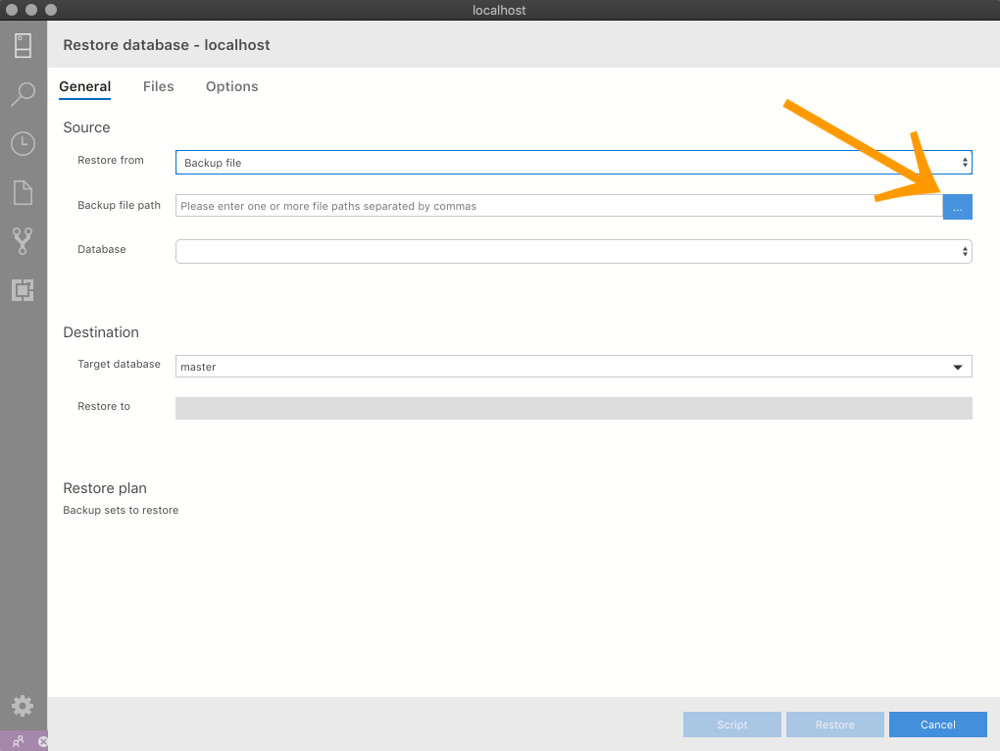

If you are trying to execute a stored procedure to modify data, then consider the Script activity, discussed next. While you are able to call a stored procedure from the Lookup activity, Microsoft does not recommend that you use the Lookup activity to call a stored procedure to modify data. The primary purpose of the Lookup activity is to read metadata from configuration files and tables, which can then be used in subsequent activities to create dynamic, metadata-driven pipelines. The Lookup activity is able to retrieve a small number of records from any of the supported data sources in ADF. The second activity to consider in ADF is the Lookup activity. For the complete list of supported data sources, see the ADF Copy activities supported source/sink matrix table. With this activity you can ingest data from almost any data source into Snowflake with ease. Snowflake can be used both as a source or a sink in the Copy activity. The Copy activity provides more than 90 different connectors to data sources, including Snowflake. Its job is to copy data from one data source (called a source) to another data source (called a sink). The Copy activity is the main workhorse in an ADF pipeline. The native Snowflake connector for ADF currently supports these main activities: For more information on ADF’s native support for Snowflake, please check out ADF’s Snowflake Connector page. This updated blog post will outline the native Snowflake support offered by ADF and offer a few suggestions for getting started. But with this second release we can finally put all of those clunky workarounds behind us. In fact, the original version of this blog post offered one such approach. Over the years there have been many different approaches to integrating ADF with Snowflake, but they have all had their share of challenges and limitations. And with this recent second release, Azure Snowflake customers are now able to use ADF as their end-to-end data integration tool with relative ease. 
Native ADF support for Snowflake has come primarily through two main releases, the first on June 7, 2020, and the second on March 2, 2022.

Since September 26, 2018, when Snowflake announced the general availability of its service on the Azure public cloud, the question that I have been asked the most by Azure customers is how to best integrate ADF with Snowflake. It has been updated to reflect currently available features and functionality.Īzure Data Factory (ADF) is Microsoft’s fully managed, serverless data integration service.

PLEASE NOTE: This post was originally published in 2019.
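To make the Lookup and Script activities described in this post a little more concrete, here is a minimal sketch in Python that assembles the JSON definition of a two-step pipeline: a Lookup that reads a configuration table, followed by a Script that runs DDL, DML, and a stored procedure call in Snowflake. Everything named here is an assumption for illustration: the linked service `SnowflakeLinkedService`, the dataset `SnowflakeConfigTable`, the table names, and the procedure `TRANSFORM_ORDERS` are hypothetical, and the JSON property names reflect my understanding of the ADF pipeline schema, so please verify them against the ADF connector and activity documentation before relying on them.

```python
import json

# Hypothetical resource names, used only for illustration.
LINKED_SERVICE = "SnowflakeLinkedService"
CONFIG_DATASET = "SnowflakeConfigTable"


def lookup_activity(name, dataset, query):
    """Lookup activity: reads a small set of metadata rows (assumed schema)."""
    return {
        "name": name,
        "type": "Lookup",
        "typeProperties": {
            "source": {"type": "SnowflakeSource", "query": query},
            "dataset": {"referenceName": dataset, "type": "DatasetReference"},
            "firstRowOnly": False,
        },
    }


def script_activity(name, linked_service, statements, depends_on=()):
    """Script activity: runs SQL statements in Snowflake (assumed schema)."""
    return {
        "name": name,
        "type": "Script",
        # Run only after the listed activities have succeeded.
        "dependsOn": [
            {"activity": dep, "dependencyConditions": ["Succeeded"]}
            for dep in depends_on
        ],
        "linkedServiceName": {
            "referenceName": linked_service,
            "type": "LinkedServiceReference",
        },
        "typeProperties": {
            # "NonQuery" marks statements that do not return a result set.
            "scripts": [{"type": "NonQuery", "text": sql} for sql in statements]
        },
    }


# Hypothetical SQL: one DDL statement, one DML statement, one procedure call.
statements = [
    "CREATE TABLE IF NOT EXISTS ORDERS_CLEAN LIKE ORDERS_RAW;",
    "INSERT INTO ORDERS_CLEAN SELECT * FROM ORDERS_RAW WHERE ORDER_ID IS NOT NULL;",
    "CALL TRANSFORM_ORDERS();",
]

pipeline = {
    "name": "SnowflakeEndToEnd",
    "properties": {
        "activities": [
            lookup_activity(
                "ReadConfig", CONFIG_DATASET, "SELECT * FROM PIPELINE_CONFIG"
            ),
            script_activity(
                "TransformInSnowflake",
                LINKED_SERVICE,
                statements,
                depends_on=["ReadConfig"],
            ),
        ]
    },
}

print(json.dumps(pipeline, indent=2))
```

The Lookup here only reads data, while all data-modifying statements go through the Script activity, in line with the recommendation above. A Copy activity would slot into the same `activities` list in the same way.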
